10 Best Practices to Secure PostgreSQL AWS RDS/Aurora

Author: Shailesh Rangani | 7 min read | July 21, 2020

AWS supports PostgreSQL versions 9.4 through 12 on RDS and 9.6 through 11 on Aurora. Many organizations are migrating to PostgreSQL on RDS or Aurora to take advantage of its availability, scalability, and performance, and are doing so as either a heterogeneous or a homogeneous migration.

Security and Compliance is a shared responsibility between AWS and the customer:

- AWS is responsible for security “OF” the cloud.

- The customer is responsible for security “IN” the cloud.

AWS RDS & Aurora Security Best Practices

When it comes to dealing with data in the cloud, security is a key aspect. Data breaches and data privacy are no longer surprising topics: in the first six months of 2019 alone, 4.1 billion records were exposed in data breaches. When thinking of securing PostgreSQL RDS or Aurora in AWS, below are the top 10 points that come to mind as a priority. Let’s walk through them one at a time.

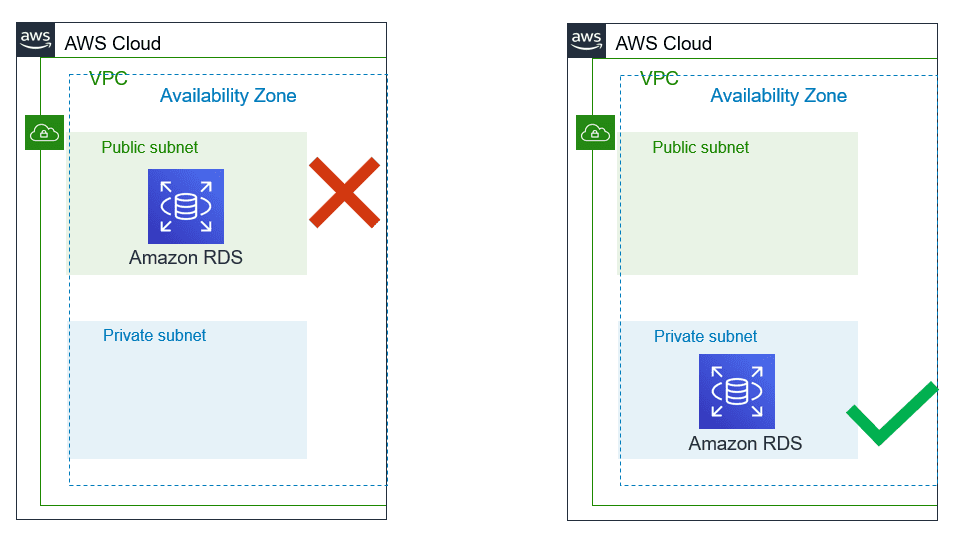

1. DB Subnet Group with Private Subnets:

A DB subnet group is a mandatory configuration when creating an RDS instance, and each DB subnet group should have subnets in at least two Availability Zones in a given AWS Region. Keep these subnets private unless the use case explicitly requires access to the RDS database from the public network.

A quick way to validate this:

- An internet gateway is not associated with the VPC/subnets/routes.

- A public IP is not assigned to RDS instances.
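
Part of this validation can be scripted. The following is a minimal sketch, assuming `instance` is one entry of the `DBInstances` list returned by boto3's `describe_db_instances`. The function name `subnet_findings` is hypothetical, and the internet gateway check is not covered because it requires inspecting the VPC route tables.

```python
def subnet_findings(instance: dict) -> list:
    """Return human-readable findings for one DB instance description."""
    findings = []

    # PubliclyAccessible is True when the instance is given a public IP address.
    if instance.get("PubliclyAccessible", False):
        findings.append("instance is publicly accessible")

    # Collect the distinct Availability Zones covered by the DB subnet group.
    subnets = instance.get("DBSubnetGroup", {}).get("Subnets", [])
    zones = {s["SubnetAvailabilityZone"]["Name"] for s in subnets}
    if len(zones) < 2:
        findings.append("DB subnet group spans fewer than two Availability Zones")

    return findings
```

An empty list means neither issue was detected for that instance.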

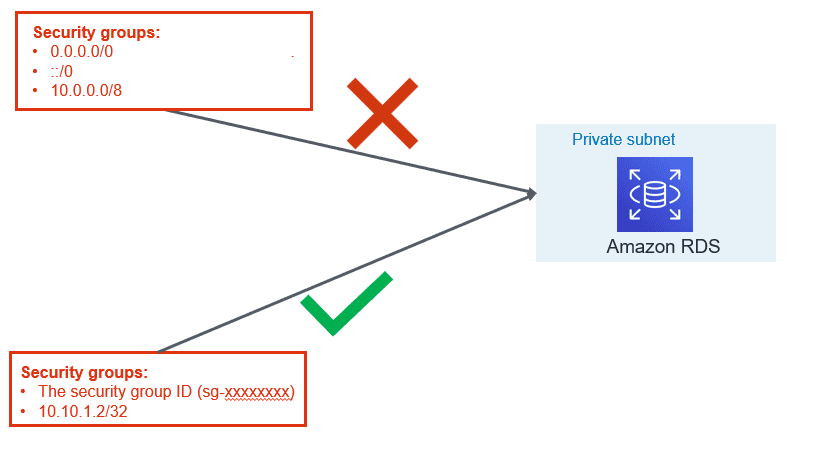

2. Managing Security Groups:

A VPC security group acts like a firewall at the instance level, controlling access to DB instances in a VPC. It plays a significant part in managing who can access an RDS instance.

- Reference the application servers’ security groups instead of individual IP addresses or ranges of IP addresses. This will allow inbound traffic ONLY from network interfaces (and their associated instances) that are assigned to that security group.

- If a specific server needs access, include only that server’s IP address in the security group rather than the range that contains it.

- Avoid granting access to desktops; it is recommended that a bastion server be used for RDS access. Tools like the psql client or pgAdmin should be installed on bastion hosts for the database administrators’ administrative needs.

- Within PostgreSQL, it is best practice to use least-privileged roles defined for a specific purpose (e.g., read role, data modification role, monitoring role).
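
To spot rules that admit a whole IP range rather than a single host or a security group, something like the sketch below may help. It assumes `ip_permissions` has the shape of the `IpPermissions` list returned by boto3's `describe_security_groups`; the function name `wide_open_rules` and the default port argument are illustrative choices, and IPv6 ranges are not examined.

```python
import ipaddress


def wide_open_rules(ip_permissions: list, db_port: int = 5432) -> list:
    """Return CIDR ranges wider than a single host that can reach db_port."""
    risky = []
    for rule in ip_permissions:
        # Rules with IpProtocol "-1" cover all ports and carry no FromPort/ToPort.
        from_port = rule.get("FromPort", 0)
        to_port = rule.get("ToPort", 65535)
        if not (from_port <= db_port <= to_port):
            continue
        for ip_range in rule.get("IpRanges", []):
            network = ipaddress.ip_network(ip_range["CidrIp"], strict=False)
            if network.num_addresses > 1:
                risky.append(ip_range["CidrIp"])
        # Entries under UserIdGroupPairs reference other security groups,
        # which is the preferred form, so they are not flagged.
    return risky
```

A `/32` entry and any security group reference pass; anything broader on the database port is returned for review.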

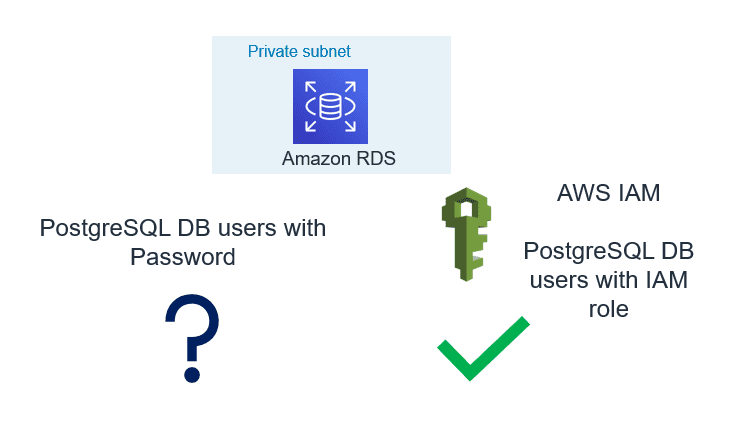

3. Use IAM Database Authentication:

AWS RDS and Aurora support authentication to the database using IAM user or role credentials. IAM authentication is more secure than the traditional method of authentication because:

- There is no need to generate a password when creating a database user.

- SSL is mandatory when using IAM authentication, which ensures that in-transit data is encrypted.

- Tokens rotate automatically, since each token is valid for only 15 minutes.

Please refer to our blog “AWS IAM to Authenticate Against RDS Instances & Aurora Clusters” for more information.
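
Because each token is valid for only 15 minutes, an application that opens new connections over time needs to fetch a fresh token periodically. Below is a minimal sketch of one way to do that. The `AuthTokenCache` class and its `fetch_token` argument are hypothetical; with boto3, the callable would typically wrap the RDS client's `generate_db_auth_token`.

```python
import time
from typing import Callable, Optional

TOKEN_LIFETIME_SECONDS = 15 * 60  # IAM auth tokens are valid for 15 minutes


class AuthTokenCache:
    """Cache an IAM auth token and refresh it shortly before it expires."""

    def __init__(self, fetch_token: Callable[[], str],
                 refresh_margin: float = 60.0,
                 clock: Callable[[], float] = time.monotonic):
        self._fetch_token = fetch_token  # hypothetical callable supplied by the caller
        self._refresh_margin = refresh_margin
        self._clock = clock
        self._token: Optional[str] = None
        self._fetched_at = 0.0

    def get(self) -> str:
        """Return a cached token, fetching a new one if the old one is near expiry."""
        age = self._clock() - self._fetched_at
        if self._token is None or age >= TOKEN_LIFETIME_SECONDS - self._refresh_margin:
            self._token = self._fetch_token()
            self._fetched_at = self._clock()
        return self._token
```

The value returned by `get()` would be passed as the password when opening a new connection over SSL. The clock is injectable only so the refresh logic can be exercised without waiting.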

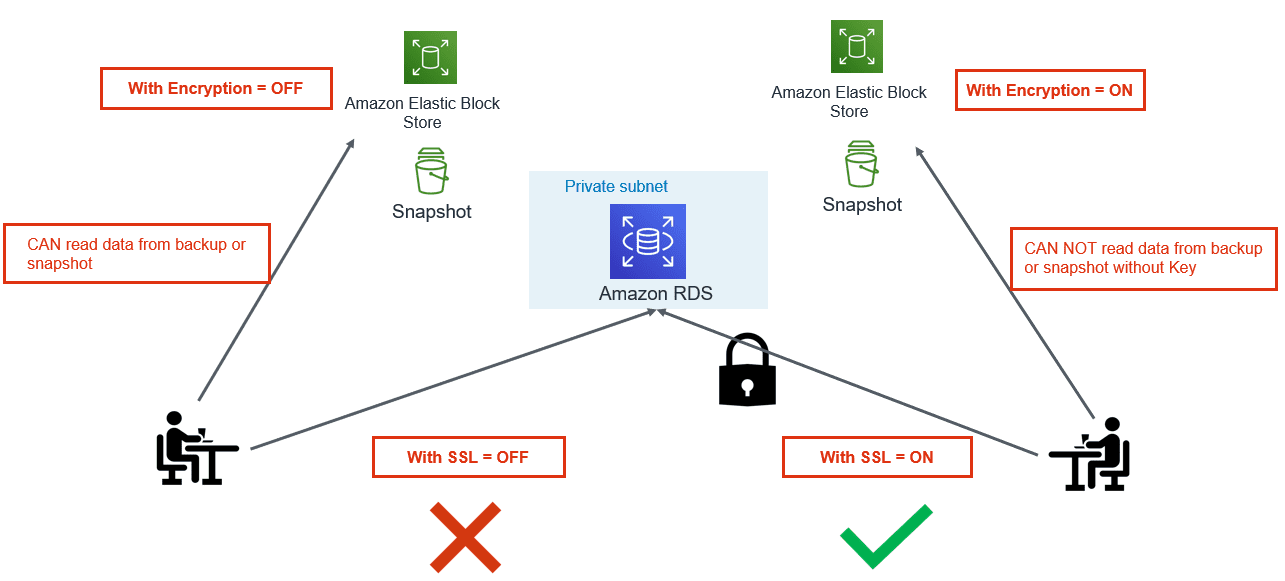

4. Enable Encryption and Force SSL:

With data breaches and cybersecurity breaches a growing concern, cloud-native encryption options can be a lifesaver. AWS provides various options to encrypt data at rest and in transit.

- Encryption at Rest: Use AWS KMS to encrypt RDS and Aurora databases. Once the database is configured with encryption, data stored in the storage layer is encrypted. Automated backups, read replicas, and snapshots are also encrypted if you are using encrypted storage.

- Encryption in Transit: PostgreSQL natively supports SSL connections to encrypt client-server communications. Check the DB instance configuration for the value of the rds.force_ssl parameter. By default, the rds.force_ssl parameter is set to 0 (off). If the rds.force_ssl parameter is set to 1, clients are required to use SSL/TLS for connections. If you are using pgBouncer, you can use various authentication methods, including TLS/SSL client certificate authentication.
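
Both settings can be checked programmatically. The sketch below assumes `instance` is one entry from boto3's `describe_db_instances` response and `parameters` is the `Parameters` list from `describe_db_parameters` for the instance's parameter group (for Aurora, the cluster parameter group would be the likely place to look). The function name `encryption_findings` is hypothetical.

```python
def encryption_findings(instance: dict, parameters: list) -> list:
    """Report missing at-rest encryption or unenforced SSL for one instance."""
    findings = []

    # StorageEncrypted reflects whether the storage layer is encrypted.
    if not instance.get("StorageEncrypted", False):
        findings.append("storage is not encrypted at rest")

    # Parameter values are returned as strings, so compare against "1".
    values = {p["ParameterName"]: p.get("ParameterValue") for p in parameters}
    if values.get("rds.force_ssl") != "1":
        findings.append("rds.force_ssl is not set to 1")

    return findings
```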

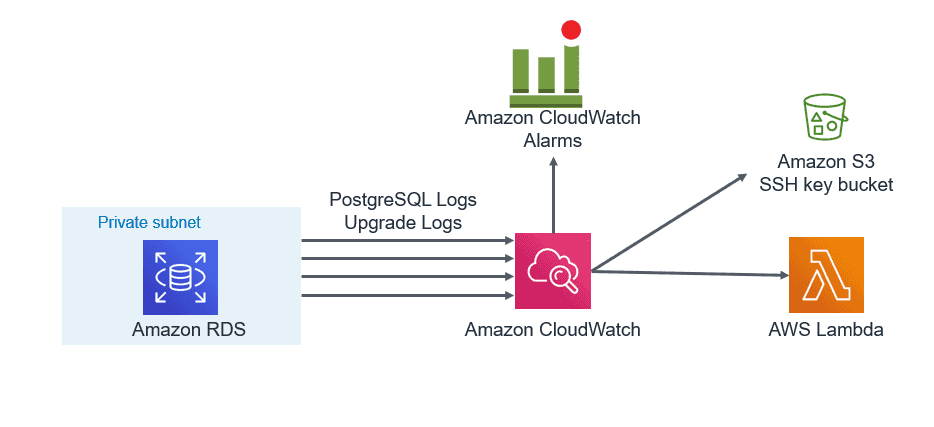

5. Export PostgreSQL Logs to CloudWatch:

You can now publish your Amazon RDS for PostgreSQL logs to CloudWatch Logs. Supported logs include both upgrade logs and PostgreSQL logs. Publishing these logs to CloudWatch allows you to maintain continuous visibility into database errors and activity.

- A database administrator can set up CloudWatch alarms to be notified of frequent restarts or failed login attempts recorded in the error log.

- One can create alarms for errors or warnings recorded in PostgreSQL logs. These logs can be exported to an S3 bucket for longer retention.
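
Before building alarms, it is worth confirming that the logs are actually being published. A minimal sketch, assuming `instance` comes from boto3's `describe_db_instances` and that the expected log types for RDS for PostgreSQL are `postgresql` and `upgrade`; the function name is hypothetical.

```python
EXPECTED_LOG_TYPES = {"postgresql", "upgrade"}


def missing_log_exports(instance: dict) -> list:
    """Return the expected log types not yet published to CloudWatch Logs."""
    enabled = set(instance.get("EnabledCloudwatchLogsExports", []))
    return sorted(EXPECTED_LOG_TYPES - enabled)
```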

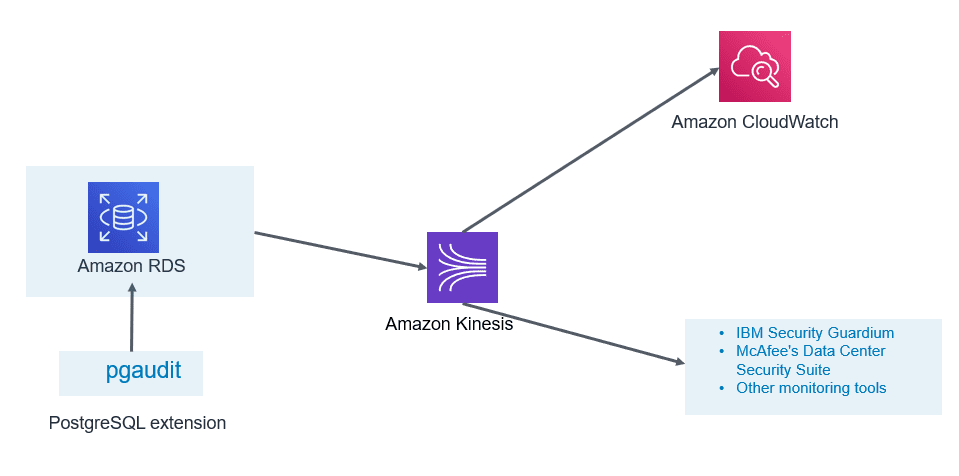

6. Enable Auditing:

- Aurora PostgreSQL supports Database Activity Streams and the pgAudit extension.

- Database activity streams can be integrated with monitoring tools for real-time monitoring and notifications.

- This helps identify unwanted events and take corrective action as soon as they are detected.

- These features can help you to meet compliance and regulatory requirements.

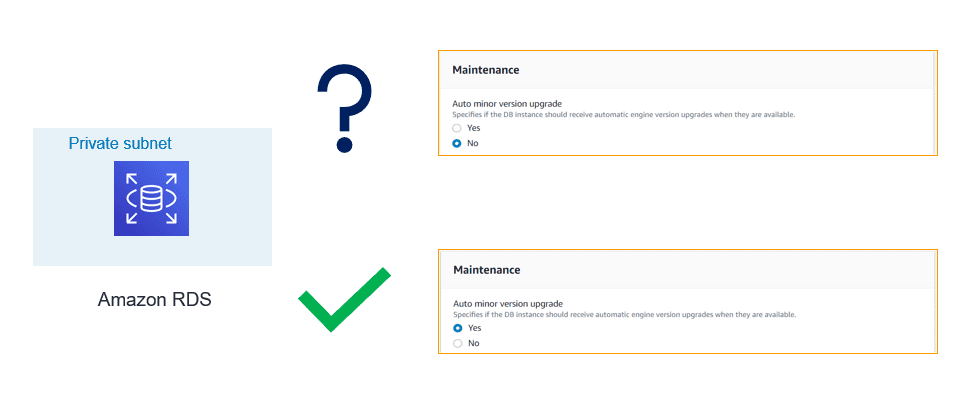

7. Timely Patching:

If the database can sustain an outage of a few minutes during the maintenance window, you should enable automatic patching of PostgreSQL minor releases. If the database cannot afford an unplanned outage, plan a scheduled outage instead, but make sure minor patches are applied regularly.

- Major releases usually bring new functionalities and features.

- Minor releases fix frequently encountered bugs, security issues, and data corruption problems to reduce the security risk.

Make sure you keep tabs on the latest vulnerabilities announced by various organizations.
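
When reviewing available upgrades, it helps to tell minor releases from major ones. The sketch below relies on PostgreSQL's versioning scheme: from version 10 onward the first number is the major version, while for 9.x the first two numbers are. The helper names are hypothetical, and the code assumes plain dotted version strings such as `11.8` or `9.6.18`.

```python
def major_version(version: str) -> str:
    """Return the major version part of a PostgreSQL version string."""
    parts = version.split(".")
    # From PostgreSQL 10 onward the major version is the first number (e.g. "11").
    # Before 10 it is the first two numbers (e.g. "9.6").
    if int(parts[0]) >= 10:
        return parts[0]
    return ".".join(parts[:2])


def _as_tuple(version: str) -> tuple:
    """Convert '11.8' to (11, 8) so versions compare numerically."""
    return tuple(int(p) for p in version.split("."))


def is_minor_upgrade(current: str, target: str) -> bool:
    """True when target is a newer minor release of the same major version."""
    if major_version(current) != major_version(target):
        return False
    return _as_tuple(target) > _as_tuple(current)
```

For example, `is_minor_upgrade("11.6", "11.8")` is true, while a move from `11.8` to `12.3` is a major upgrade and returns false.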

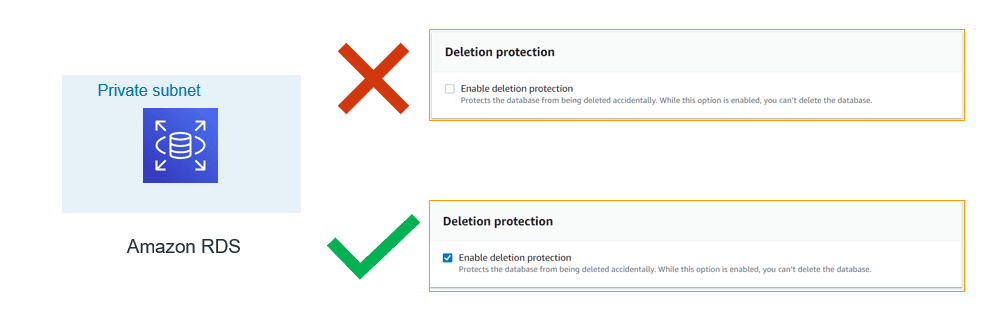

8. Enable Deletion Protection:

- If deletion protection is enabled and you try to delete the RDS instance, your request will fail. To proceed, you must first modify the instance and disable deletion protection. This setting is helpful when the delete request is accidental.

- If you are using tools like Terraform or CloudFormation to support infrastructure as code and automation, you can configure the respective flags to enable deletion protection.

- For the production environment, it is highly recommended to enable this flag.
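
A quick inventory of instances that lack the flag might look like the sketch below. It assumes `instances` is the `DBInstances` list from boto3's `describe_db_instances`; the function name is hypothetical.

```python
def unprotected_instances(instances: list) -> list:
    """Return identifiers of instances that have deletion protection disabled."""
    return [
        instance["DBInstanceIdentifier"]
        for instance in instances
        if not instance.get("DeletionProtection", False)
    ]
```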

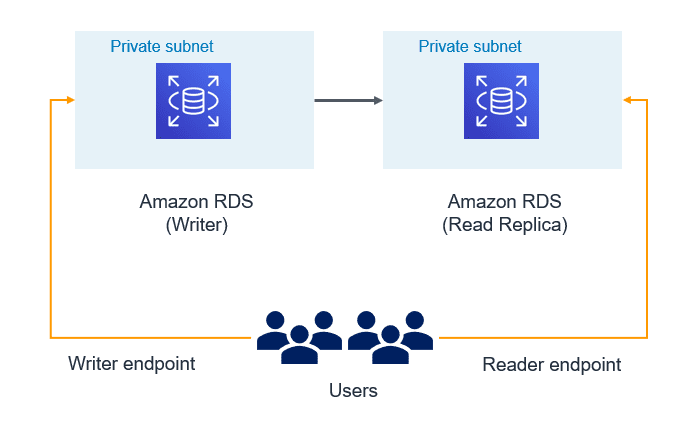

9. Use Database Endpoints:

- If a read replica is configured and the application has a read-only workload, make sure the reader endpoint is used in the application configuration. This ensures that data modifications (DML) are not performed by unintended or accidental operations.

- Use the writer/cluster endpoint only for applications that need to modify data. If needed, you may even create a custom endpoint for a specific application or use case.
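
In application configuration, the choice of endpoint can be made explicit. Here is a minimal sketch, assuming `cluster` is one entry of the `DBClusters` list from boto3's `describe_db_clusters`, where `Endpoint` is the writer endpoint and `ReaderEndpoint` is the reader endpoint. The function name `endpoint_for` is hypothetical.

```python
def endpoint_for(cluster: dict, read_only: bool) -> str:
    """Pick the reader endpoint for read-only workloads, the writer otherwise."""
    if read_only:
        return cluster["ReaderEndpoint"]
    return cluster["Endpoint"]
```

A reporting service would call `endpoint_for(cluster, read_only=True)`, so an accidental write fails instead of reaching the primary.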

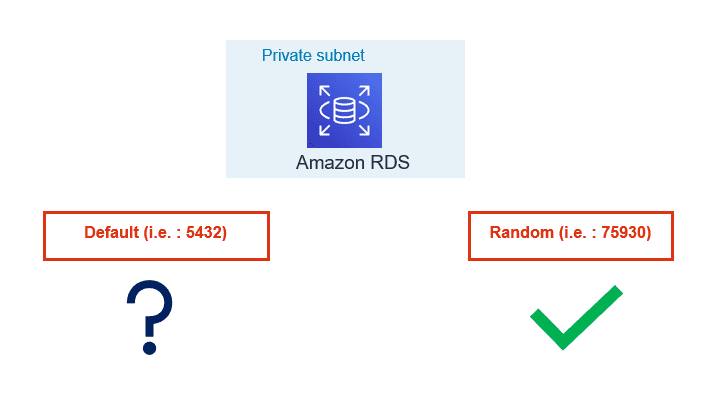

10. Use custom port:

- By default, Oracle listens on 1521, SQL Server on 1433, and MySQL on 3306. Similarly, PostgreSQL is configured by default to listen on TCP port 5432. Historically, as a security best practice, it has been recommended not to use the default ports for connections to any DB server.

- Automated attacks typically probe for services running on default ports, so running your DB services on a non-default port can help you avoid them. AWS RDS/Aurora allows you to configure the database with a non-default port.

- Many organizations have an enterprise-level practice of using non-default ports as part of their security guidelines. If your organization is using CloudFormation or Terraform, setting a non-default port in those templates can be an easy way to standardize.
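
To find instances still listening on a well-known port, a check along these lines could be used. It is a sketch that assumes `instance` comes from boto3's `describe_db_instances`, where the port is reported under `Endpoint`; the function name is hypothetical and the port table simply mirrors the defaults listed above.

```python
DEFAULT_DB_PORTS = {1521: "Oracle", 1433: "SQL Server", 3306: "MySQL", 5432: "PostgreSQL"}


def default_port_engine(instance: dict):
    """Return the engine whose default port the instance uses, or None."""
    port = instance.get("Endpoint", {}).get("Port")
    return DEFAULT_DB_PORTS.get(port)
```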

AWS provides various features and integrations to harden security, along with auditing and real-time monitoring of PostgreSQL RDS/Aurora.

Following the security best practices discussed here helps ensure that only authorized users and applications have access to the database. If you have inquiries or need assistance analyzing PostgreSQL RDS/Aurora clusters from a security perspective, contact us. Datavail provides 24×7 support for your database environments.