Node Management in Cassandra: Ensuring Scalability and Resilience

Author: Satish Rakhonde | 9 min read | December 28, 2023

Cassandra is a highly scalable and distributed NoSQL database that is known for its ability to handle large volumes of data across multiple commodity servers. As an administrator or developer working with Cassandra, understanding node management is crucial for ensuring the performance, scalability, and resilience of your database cluster. In this blog post, we will delve into the intricacies of node management in Cassandra and explore various aspects that need to be considered to effectively manage the nodes in your Cassandra cluster.

Understanding Nodes in Cassandra

In Cassandra, a node refers to an individual server that stores data and participates in the distributed architecture of the database cluster. Each node is responsible for a portion of the data, and data is distributed across multiple nodes using a mechanism called partitioning. It is important to grasp the concepts of replication factor, data distribution, and token assignment to effectively manage nodes in Cassandra.
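To make token assignment and replication factor more concrete, here is a minimal sketch of how a partition key could be mapped to replica nodes on a token ring. It is only an illustration under simplifying assumptions: each node holds a single token, MD5 stands in for Cassandra's real partitioner, replicas are chosen by walking the ring clockwise (similar in spirit to a simple, single-datacenter placement), and the node names and the key are hypothetical.

```python
import bisect
import hashlib


def token_for(key: str) -> int:
    """Map a key to a ring position (MD5 is a stand-in for the real partitioner)."""
    return int.from_bytes(hashlib.md5(key.encode("utf-8")).digest()[:8], "big")


def build_ring(nodes):
    """Give each node one token derived from its name and return the sorted ring."""
    return sorted((token_for(name), name) for name in nodes)


def replicas_for(key: str, ring, replication_factor: int):
    """Walk clockwise from the key's token and collect distinct nodes."""
    tokens = [token for token, _ in ring]
    # The first node whose token is >= the key's token owns the key;
    # the modulo wraps around to the start of the ring.
    start = bisect.bisect_left(tokens, token_for(key)) % len(ring)
    owners = []
    for offset in range(len(ring)):
        node = ring[(start + offset) % len(ring)][1]
        if node not in owners:
            owners.append(node)
        if len(owners) == replication_factor:
            break
    return owners


# Hypothetical four-node cluster and key, for illustration only.
ring = build_ring(["node-a", "node-b", "node-c", "node-d"])
print(replicas_for("user:42", ring, replication_factor=3))
```

With a replication factor of 3, every key ends up on three distinct nodes, which is why the loss of a single node does not make the data unavailable.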

Adding and Removing Nodes

Adding or removing nodes from a Cassandra cluster requires careful planning and execution to maintain data availability and consistency. The replication factor is configured per keyspace rather than per node, so when adding a node the main concerns are that it is assigned appropriate token ranges and that it receives its share of the data. Similarly, when removing a node, the data it holds must be redistributed across the remaining nodes to maintain performance and fault tolerance.
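As part of that planning, a quick back-of-the-envelope check can help before removing a node. The sketch below assumes a single datacenter, one replication factor for all data, and evenly balanced nodes; the function names and the figures in the usage note are hypothetical.

```python
def can_remove_node(node_count: int, replication_factor: int) -> bool:
    """Return True if at least `replication_factor` nodes remain after one leaves."""
    if node_count < 1 or replication_factor < 1:
        raise ValueError("node_count and replication_factor must be positive")
    return node_count - 1 >= replication_factor


def projected_load_gb(dataset_gb: float, replication_factor: int, node_count: int) -> float:
    """Estimate the data each node stores, assuming an even distribution."""
    if node_count < 1:
        raise ValueError("node_count must be positive")
    return dataset_gb * replication_factor / node_count
```

For example, with 1000 GB of unique data and a replication factor of 3, `projected_load_gb(1000, 3, 6)` and `projected_load_gb(1000, 3, 5)` show how much more each node would hold after going from six nodes to five.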

Bootstrapping and Decommissioning Nodes

The process of adding a new node to an existing Cassandra cluster is known as bootstrapping. During bootstrapping, the new node joins the cluster, receives data for its assigned token range, and establishes communication with other nodes. On the other hand, decommissioning involves removing a node gracefully from the cluster by redistributing its data to other nodes. Proper bootstrapping and decommissioning procedures are essential to maintaining data integrity and minimizing disruptions during cluster expansion or contraction.

Below are some steps:

Bootstrapping a Node

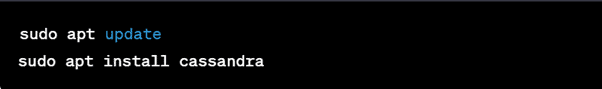

- Install Cassandra on the new node by following the installation instructions specific to your Linux distribution. For example, on Ubuntu, you can use the following commands:

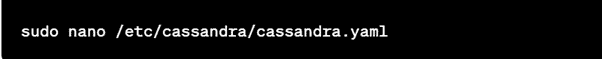

- Once Cassandra is installed, open the `cassandra.yaml` configuration file, located in the `/etc/cassandra/` directory, in a text editor.

- In the `cassandra.yaml` file, update the following properties to configure the new node:

– cluster_name: Set the name of your Cassandra cluster. It must match the name used by the existing nodes, otherwise the new node will not join.

– seeds: Specify the IP addresses of the existing seed nodes in the cluster. These are the nodes that the new node will contact to join the cluster.

- Save the changes to the `cassandra.yaml` file and exit the text editor.

- Start the Cassandra service on the new node:

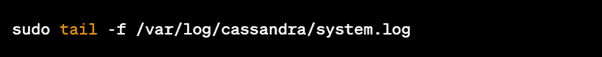

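- Monitor the system logs to ensure that the new node successfully joins the cluster. On package installations the log is typically written to `/var/log/cassandra/system.log`, so you can follow it with `tail -f /var/log/cassandra/system.log`.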
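Besides the logs, the join can also be followed through `nodetool status`, where each node row starts with a two-letter code: `U` or `D` for up or down, followed by `N`, `L`, `J`, or `M` for normal, leaving, joining, or moving. The following is a rough sketch that looks up one node's code in that output. It assumes the row layout of code first and address second, and the helper names are hypothetical.

```python
import subprocess

STATE_NAMES = {"N": "Normal", "L": "Leaving", "J": "Joining", "M": "Moving"}


def node_state(status_output: str, address: str):
    """Return (is_up, state_name) for `address`, or None if it is not listed."""
    for line in status_output.splitlines():
        fields = line.split()
        # Node rows are assumed to start with a two-letter code such as UN or UJ,
        # followed by the node's address.
        if len(fields) >= 2 and len(fields[0]) == 2 and fields[1] == address:
            status, state = fields[0][0], fields[0][1]
            if status in "UD" and state in STATE_NAMES:
                return status == "U", STATE_NAMES[state]
    return None


def fetch_status() -> str:
    """Run `nodetool status` on the local machine and return its text output."""
    result = subprocess.run(
        ["nodetool", "status"], capture_output=True, text=True, check=True
    )
    return result.stdout
```

Calling `node_state(fetch_status(), address)` with the new node's IP address should report a joining state while the node is bootstrapping and a normal state once it has finished.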

Decommissioning a Node

- SSH into the node that you want to decommission from the Cassandra cluster.

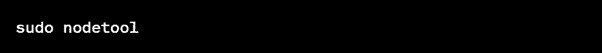

- Run `nodetool decommission` on that node. `nodetool` is a regular command-line utility rather than an interactive shell, so the command is issued directly from the terminal.

- The command blocks until the node has streamed its data to the remaining replicas. You can follow the streaming progress from a second terminal with `nodetool netstats`.

- Once the decommissioning process is finished, stop the Cassandra service on the node, for example with `sudo systemctl stop cassandra` on systemd-based systems.

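- The remaining nodes do not need to be restarted: decommissioning streams the departing node's data to them before it leaves the ring. Run `nodetool status` on one of the remaining nodes to confirm that the decommissioned node is no longer listed.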

Repair and Maintenance

Regular repair and maintenance operations are crucial for keeping a Cassandra cluster healthy and performing optimally. Repair ensures data consistency across nodes by validating and synchronizing replicas. It is essential to schedule regular repairs and implement an appropriate strategy based on the size of the cluster and data volume. Additionally, performing routine maintenance tasks such as compaction, disk space management, and monitoring node performance is essential for a stable and reliable Cassandra cluster.
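A widely used rule of thumb for scheduling is to complete a repair of every node within `gc_grace_seconds`, so that deletions reach all replicas before their tombstones become eligible for removal. The sketch below checks a node's last repair time against that window. The default of 864000 seconds (10 days) is Cassandra's default for the setting; the 80% safety margin and the function name are assumptions made for illustration.

```python
from datetime import datetime, timedelta, timezone

DEFAULT_GC_GRACE_SECONDS = 864_000  # Cassandra's default gc_grace_seconds (10 days)


def repair_is_overdue(
    last_repair: datetime,
    now: datetime,
    gc_grace_seconds: int = DEFAULT_GC_GRACE_SECONDS,
    safety_margin: float = 0.8,  # assumed margin, not a Cassandra setting
) -> bool:
    """True once the time since the last repair exceeds a share of gc_grace_seconds."""
    allowed = timedelta(seconds=gc_grace_seconds * safety_margin)
    return now - last_repair > allowed


# Hypothetical timestamps for illustration.
last = datetime(2024, 1, 1, tzinfo=timezone.utc)
print(repair_is_overdue(last, last + timedelta(days=9)))
```

With the defaults, the allowed window is eight days, so a node last repaired nine days ago is flagged.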

Below are some steps:

Repairing a Node

- SSH into the node that you want to repair.

- Open a terminal and run `nodetool repair` to start the repair.

- By default, this command repairs all keyspaces on the node. If you want to repair a specific keyspace, run `nodetool repair <keyspace_name>` instead.

- Monitor the output to observe the progress of the repair operation. The nodetool repair command streams data between nodes to ensure data consistency.

- Once the repair is completed, you can confirm that no repair streams are still active, for example with `nodetool netstats`.
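When repairs are run from a script, the steps above can be wrapped in a small helper. The following sketch builds the `nodetool repair` command line, optionally restricted to one keyspace and to the node's primary ranges via the `-pr` option. The function names, the dry-run behavior, and the `shop` keyspace in the usage note are hypothetical.

```python
import subprocess


def repair_command(keyspace=None, tables=(), primary_range_only=False):
    """Build the argument list for a `nodetool repair` invocation."""
    if tables and not keyspace:
        raise ValueError("tables can only be given together with a keyspace")
    command = ["nodetool", "repair"]
    if primary_range_only:
        command.append("-pr")  # repair only the ranges this node is primary for
    if keyspace:
        command.append(keyspace)
        command.extend(tables)
    return command


def run_repair(keyspace=None, tables=(), primary_range_only=False, dry_run=True):
    """Print the command in dry-run mode, otherwise run it and return its exit code."""
    command = repair_command(keyspace, tables, primary_range_only)
    if dry_run:
        print(" ".join(command))
        return 0
    return subprocess.run(command).returncode
```

For instance, `run_repair("shop", primary_range_only=True)` prints the command for a primary-range repair of a keyspace named `shop` without executing it; passing `dry_run=False` would run it on the local node.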

Maintenance Operations

- Running Cleanup:

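- To remove data that a node no longer owns and reclaim disk space, for example on existing nodes after a new node has joined the cluster, run `nodetool cleanup`.

- This command rewrites SSTables and drops partitions whose token ranges now belong to other nodes. It does not remove tombstones (markers for deleted data); those are purged by compaction once `gc_grace_seconds` has passed.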

- Running Nodetool Flush:

- To flush memtables to disk manually, run `nodetool flush`.

- This command forces the memtables to be written to disk as SSTables, which can shorten commit log replay when the node restarts.

- Running Nodetool Compact:

- To manually trigger compaction on a node, run `nodetool compact`.

- This command starts a major compaction, merging SSTables to reclaim disk space and improve read performance.

- Running Nodetool Upgradesstables:

- If you’ve upgraded Cassandra and want to upgrade SSTables to the latest version, run `nodetool upgradesstables`.

- This command rewrites SSTables that are not yet in the SSTable format of the current Cassandra version.
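Operations that rewrite SSTables, such as compaction, cleanup, and upgradesstables, temporarily need extra disk space while the old and new files coexist. As a hedged illustration of the disk space management mentioned earlier, this sketch checks the free space on a volume before such an operation. The 50% threshold is an assumed rule of thumb rather than a Cassandra setting, and the function names are hypothetical.

```python
import shutil


def free_fraction(path: str) -> float:
    """Return the fraction of the volume containing `path` that is free."""
    usage = shutil.disk_usage(path)
    return usage.free / usage.total


def safe_to_compact(free: float, min_free_fraction: float = 0.5) -> bool:
    """True if the free fraction meets the assumed headroom threshold."""
    return free >= min_free_fraction
```

On a node, `safe_to_compact(free_fraction(data_dir))` would be called with the Cassandra data directory, which on package installations is commonly `/var/lib/cassandra/data`.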

Monitoring and Alerting

To effectively manage nodes in Cassandra, continuous monitoring and alerting are essential. Monitoring tools such as DataStax OpsCenter, Prometheus, or Grafana can provide insights into the performance, availability, and health of individual nodes and the cluster as a whole. Administrators can identify potential issues and take necessary actions before they escalate by proactively monitoring key metrics such as latency, throughput, disk usage, and resource utilization.

Below are some steps:

- Monitoring Node Status:

- To check the status of a Cassandra node, open a terminal and run `nodetool status`.

- This command lists every node in the cluster with its state (for example `UN` for Up/Normal), load, token count, ownership, host ID, and rack.

- Viewing Cluster Information:

- To get an overview of the Cassandra cluster and its nodes, run `nodetool describecluster`.

- This command provides information about the cluster name, partitioner, snitch, and other configuration details.

- Monitoring Node Performance:

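- To view thread pool activity on a node, run `nodetool tpstats`. For heap memory usage and uptime, use `nodetool info`, and for per-table read/write latency use `nodetool tablestats`. CPU usage is reported by operating system tools such as `top` rather than by nodetool.

- `nodetool tpstats` provides thread pool statistics, including pending tasks, active tasks, and completed tasks.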

- Monitoring Compaction:

- To monitor the progress and statistics of compaction processes in Cassandra, run `nodetool compactionstats`.

- This command displays the number of pending compaction tasks and the progress of each compaction that is currently running. Completed compactions are listed by `nodetool compactionhistory`.

- Monitoring Pending Read/Write Tasks:

- To check the number of pending read and write tasks on the node, run `nodetool tpstats` and look at the `ReadStage` and `MutationStage` rows.

- The `Pending` column of these rows shows the tasks waiting in the read and write thread pools.

- Generating a Diagnostic Report:

- You may also need to generate a diagnostic report for troubleshooting or debugging purposes.
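The pending-task checks above can also be scripted. The sketch below extracts the pending count per thread pool from `nodetool tpstats` text. It assumes rows of six whitespace-separated fields (pool name, active, pending, completed, blocked, all time blocked); the exact layout can vary between Cassandra versions, and the function names are hypothetical.

```python
def pending_by_pool(tpstats_output: str) -> dict:
    """Return {pool_name: pending_tasks} for rows that look like thread pool stats."""
    pending = {}
    for line in tpstats_output.splitlines():
        fields = line.split()
        # Assumed row shape: name, active, pending, completed, blocked,
        # all time blocked. Headers and other sections do not match and are skipped.
        if len(fields) == 6 and all(value.isdigit() for value in fields[1:]):
            pending[fields[0]] = int(fields[2])
    return pending


def backlog(tpstats_output: str, pools=("ReadStage", "MutationStage"), limit: int = 0) -> dict:
    """Return the watched pools whose pending count is above `limit`."""
    stats = pending_by_pool(tpstats_output)
    return {name: stats[name] for name in pools if stats.get(name, 0) > limit}
```

Feeding it the captured output of `nodetool tpstats` gives a dictionary that is empty when the read and write pools have no backlog, which makes it easy to plug into a periodic check.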

Alerting

Cassandra does not have built-in alerting capabilities, but you can leverage third-party monitoring tools or integrate Cassandra with monitoring systems like Prometheus, Grafana, or DataDog. These tools provide more advanced monitoring features, including alerting based on custom thresholds and metrics.
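To illustrate what threshold-based alerting amounts to, here is a small standalone sketch that compares collected metric values against custom limits. It is not the API of any of the tools named above; the metric names, limits, and messages are hypothetical, and in practice the values would come from your monitoring system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Threshold:
    metric: str
    limit: float
    message: str


def evaluate(metrics: dict, thresholds) -> list:
    """Return one alert string per threshold whose metric exceeds its limit."""
    alerts = []
    for rule in thresholds:
        value = metrics.get(rule.metric)
        if value is not None and value > rule.limit:
            alerts.append(f"{rule.metric}={value} exceeds {rule.limit}: {rule.message}")
    return alerts


# Hypothetical metrics and thresholds for illustration.
rules = [
    Threshold("disk_used_pct", 85.0, "add capacity or clean up data"),
    Threshold("pending_compactions", 20, "compaction is falling behind"),
]
for alert in evaluate({"disk_used_pct": 91.0, "pending_compactions": 4}, rules):
    print(alert)
```

Metrics that are missing from the input are skipped rather than treated as breaches, which keeps a partially failed collection from raising false alarms.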

Scaling and Load Balancing

As your data volume and user base grow, scaling your Cassandra cluster becomes inevitable. Cassandra supports horizontal scaling by adding more nodes to the cluster, which allows you to handle increased data traffic and maintain performance. Proper load balancing ensures that data is evenly distributed across nodes, maximizing throughput and minimizing hotspots. Techniques such as virtual nodes (vnodes) and consistent hashing help automate the process of load balancing and scaling in Cassandra.
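The effect of virtual nodes on balance can be explored with a short simulation. The sketch below assigns each node a number of tokens on a hash ring and computes the share of the ring each node owns. MD5 stands in for the real partitioner, tokens are derived from hypothetical node names rather than allocated the way Cassandra does, and the number of tokens per node corresponds loosely to the `num_tokens` setting in `cassandra.yaml`.

```python
import hashlib
from collections import Counter

RING_SIZE = 2 ** 64


def token_for(label: str) -> int:
    """Map a label to a ring position (MD5 is a stand-in for the real partitioner)."""
    return int.from_bytes(hashlib.md5(label.encode("utf-8")).digest()[:8], "big")


def ownership(nodes, tokens_per_node: int) -> dict:
    """Return each node's share of the ring given `tokens_per_node` tokens each."""
    ring = sorted(
        (token_for(f"{node}#{index}"), node)
        for node in nodes
        for index in range(tokens_per_node)
    )
    shares = Counter()
    for position, (token, node) in enumerate(ring):
        # A token owns the range from the previous token up to itself;
        # index -1 wraps around to the last token on the ring.
        previous = ring[position - 1][0]
        shares[node] += (token - previous) % RING_SIZE
    return {node: shares[node] / RING_SIZE for node in nodes}


# Hypothetical node names for illustration.
cluster = ["node-a", "node-b", "node-c", "node-d"]
for count in (1, 16):
    print(count, ownership(cluster, count))
```

Comparing the shares for one token per node with those for several tokens per node gives a feel for how vnodes spread ownership across the cluster.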

Conclusion

Efficient node management is crucial for ensuring the scalability, resilience, and performance of your Cassandra cluster. Understanding the concepts and best practices related to adding, removing, bootstrapping, decommissioning, repairing, and maintaining nodes is essential for database administrators and developers. By following the guidelines outlined in this blog post, you can effectively manage nodes in Cassandra and build a robust, distributed database infrastructure capable of handling your growing data needs.